CIRCA:Basic Statistical Analysis

Basic Statistical Analysis

Definition

What are Statistics

Statistics is a quantitative method concerned with the collection, analysis, and interpretation of data. It is frequently used to find relationships between data sets, to extract important properties from data sets, and to produce visualizations.

Because statistics is primarily a numerical method, it produces facts about the numbers themselves and not about the objects under study. A statistical relationship is only as good as the validity of the data being analyzed. A firm understanding of both the statistical method and the interpretation of its numerical results is important in order to get the most out of any statistical analysis.

How Statistics Work

Statistics makes a number of very broad assumptions about any data set that is being studied. The most important assumptions will be discussed below.

Random Number

Statistics are generally collected from a representative sample of a larger group of objects. Statistical methods assume that each measurement is a single randomly generated value that follows a probability distribution associated with the larger group. The most commonly assumed probability distribution is the normal distribution, which assumes that most measurements will be close to the class's mean value; variations from the mean are possible but become rarer as the distance from the mean increases. One of the main goals of statistics is to estimate this probability distribution and use it to make inferences about objects outside the representative sample.

Numerous other probability distributions are also available for study.
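As a minimal sketch of what "close to the mean, rarer further away" looks like in practice, the R snippet below draws values from a normal distribution and counts how many fall within one, two, and three standard deviations of the sample mean. The population mean of 100 and standard deviation of 15 are illustrative assumptions, not values from any real data set.

```r
set.seed(42)  # fixed seed so the sketch is reproducible

# Hypothetical population: normally distributed around 100 with sd 15
# (both numbers are illustrative assumptions).
sample_values <- rnorm(1000, mean = 100, sd = 15)

# Distance of each measurement from the sample mean, in standard deviations
z <- abs(sample_values - mean(sample_values)) / sd(sample_values)

# Share of measurements within 1, 2 and 3 standard deviations of the mean
share_within <- sapply(1:3, function(k) mean(z <= k))
names(share_within) <- c("1 sd", "2 sd", "3 sd")
print(share_within)
```

For a normal distribution the theoretical shares are roughly 68%, 95%, and 99.7%, so the printed values should land near those figures without matching them exactly.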

Law of Large Numbers

The law of large numbers describes a simple relationship between the distribution of a sample and the distribution of the entire class of objects. In general, larger samples better represent the class as a whole.

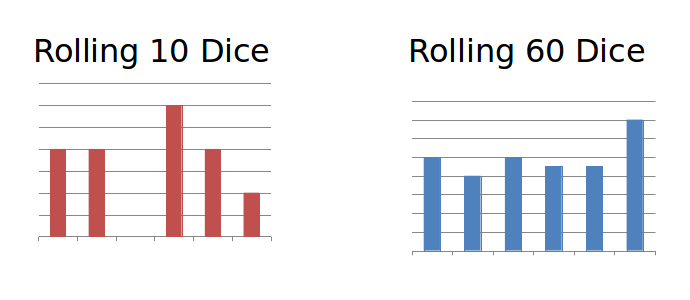

For example, a die has an even (uniform) probability distribution. In theory each side of a die has an equal probability of coming up when it is rolled. If I were to roll a die ten times, the observed distribution would probably not be even, with some outcomes coming up much more frequently than others. If I were to roll the same die sixty times, the distribution would be much more even. The law of large numbers states that the more I roll the die, the closer the observed frequencies will come to the theoretical probabilities.
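The die example can be simulated directly. The sketch below rolls a simulated fair die a growing number of times and reports the largest gap between any face's observed share and the theoretical 1/6. The helper names `roll_shares` and `max_gap` and the chosen roll counts are introduced here purely for illustration.

```r
set.seed(1)  # fixed seed so the sketch is reproducible

# Roll a fair six-sided die n times and return the observed share of each face
roll_shares <- function(n) {
  rolls <- sample(1:6, size = n, replace = TRUE)
  table(factor(rolls, levels = 1:6)) / n
}

# Largest gap between an observed share and the theoretical 1/6
max_gap <- function(n) max(abs(roll_shares(n) - 1/6))

sizes <- c(10, 60, 600, 60000)
gaps <- sapply(sizes, max_gap)
print(data.frame(rolls = sizes, largest_gap = round(gaps, 4)))
```

The gap tends to shrink as the number of rolls grows, although any individual run can fluctuate, especially at the small sample sizes.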

The P Value

Because statistics relies on probabilities, there is always a chance that an apparent relationship emerges from data where no relationship actually exists. Most statistical methods produce a P value, which is the probability of seeing a result at least as strong as the one observed if only random fluctuations were at work. A low P value indicates that the outcome is unlikely to be the result of random fluctuations in the data. A high P value means the data cannot be distinguished from simple noise; it does not prove that no relationship exists.

It is important to pick a P value threshold (the significance level) prior to performing any statistical analysis. In general a P value of less than 5% is desired; however, for important or sensitive data a threshold of 1% or lower is generally used.
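One way to see what a 5% threshold means is to run many comparisons in which no real relationship exists and count how often the P value still falls below the threshold. The following is a rough sketch under assumed settings (2000 simulated experiments, 30 observations per sample), using R's built-in `t.test`.

```r
set.seed(123)  # fixed seed so the sketch is reproducible

alpha <- 0.05      # significance threshold chosen before the analysis
n_trials <- 2000   # number of simulated experiments (illustrative)

# Each trial compares two samples drawn from the SAME distribution,
# so any "significant" difference is a coincidence.
p_values <- replicate(n_trials, {
  a <- rnorm(30)
  b <- rnorm(30)
  t.test(a, b)$p.value
})

# Share of trials that would wrongly be declared significant
false_positive_rate <- mean(p_values < alpha)
print(false_positive_rate)
```

The printed rate should sit close to the chosen threshold: with a 5% cut-off, about one in twenty comparisons of pure noise will still look significant.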

Interpreting Correlation

Statistics never produces a causal model of what it analyses. A strong statistical relationship between two data sets does not imply that one causes the other. It only implies that one is a good predictor of the other, or that the two are somehow related, for example through a shared underlying factor. Statistical relationships therefore cannot be used on their own to prove that one object is causally linked to another.
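A small simulation can make this concrete. In the sketch below, two variables are both driven by a third, hidden factor and never influence each other, yet they show a strong and highly significant correlation. The variable names, coefficients, and noise levels are all hypothetical choices for illustration.

```r
set.seed(7)  # fixed seed so the sketch is reproducible

n <- 500
# Hypothetical hidden factor that drives both measurements
hidden <- rnorm(n)

# x and y each depend on the hidden factor plus independent noise;
# neither one influences the other.
x <- 2 * hidden + rnorm(n, sd = 0.5)
y <- -3 * hidden + rnorm(n, sd = 0.5)

result <- cor.test(x, y)
print(result$estimate)  # strong negative correlation
print(result$p.value)   # very small P value

# Removing the hidden factor's contribution leaves only unrelated noise
x_resid <- x - 2 * hidden
y_resid <- y + 3 * hidden
print(cor(x_resid, y_resid))
```

The correlation test alone cannot distinguish this situation from one in which `x` directly causes `y`; only knowledge of how the data were generated can.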

Examples

T-Test

One statistic useful in text analysis is the average length of the words that are used. Similar vocabularies will generally produce similar distributions of word length in characters. A test for authorship, for example, could assume that all works by the same author have a similar vocabulary and therefore a similar average word length. A t-test is a statistical method that compares the means of two samples and asks whether the two samples could plausibly come from distributions with the same mean. The example below applies the same reasoning to the average sentence length, measured in characters.

Using R code (with the openNLP package):

    # sentDetect() is provided by older releases of the openNLP package
    library(openNLP)

    # import two books from Project Gutenberg, one element per line of text
    pride <- scan(file="http://www.gutenberg.org/cache/epub/1342/pg1342.txt", what='char', sep="\n")
    flat <- scan(file="http://www.gutenberg.org/cache/epub/97/pg97.txt", what='char', sep="\n")

    # split the text into sentences
    pride.sen <- sentDetect(pride)
    flat.sen <- sentDetect(flat)

    # get the character count of each sentence
    pride.nchar <- nchar(pride.sen)
    flat.nchar <- nchar(flat.sen)

    # perform the t-test on the two sets of sentence lengths
    t.test(pride.nchar, flat.nchar)

This produces the following output:

    data:  pride.nchar and flat.nchar
    t = -8.1743, df = 1713.104, p-value = 5.726e-16
    alternative hypothesis: true difference in means is not equal to 0
    95 percent confidence interval:
     -39.28293 -24.07959
    sample estimates:
    mean of x mean of y
     125.7389  157.4202

The P value in this case is much lower than 1%, so there is a statistically significant difference between the average sentence length of 'Pride and Prejudice' (about 126 characters) and 'Flatland' (about 157 characters).
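The word-length comparison described at the start of this example can be sketched with base R alone. The snippet below is an illustration rather than a reproduction of the analysis above: it uses only the opening sentence of each book as a stand-in for the full text, and the helper `word_lengths` is a name introduced here. With samples this small the resulting P value says nothing reliable about either author.

```r
# Opening sentences used as tiny stand-ins for the full texts (assumption:
# a real analysis would use the complete books).
text_a <- "It is a truth universally acknowledged, that a single man in possession of a good fortune, must be in want of a wife."
text_b <- "I call our world Flatland, not because we call it so, but to make its nature clearer to you, my happy readers, who are privileged to live in Space."

# Split a text into words and return the number of characters in each word
word_lengths <- function(text) {
  words <- unlist(strsplit(tolower(text), "[^a-z]+"))
  words <- words[nchar(words) > 0]  # drop empty strings left by the split
  nchar(words)
}

len_a <- word_lengths(text_a)
len_b <- word_lengths(text_b)

# Compare the mean word length of the two passages
result <- t.test(len_a, len_b)
print(result)
```

Swapping `text_a` and `text_b` for the full texts would give the word-length counterpart of the sentence-length test shown above.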